The AI industry has converged on two modes. AI as Copilot: the human drives, the AI assists with tasks. AI as Agent: the human sets goals, the AI executes autonomously. Both are valuable. Both share a structural flaw: they don’t improve human judgement over time. There is a third mode the industry is largely missing, because it’s harder to build and harder to market.

The Copilot keeps the human in the loop but doesn’t sharpen their thinking. You’re still doing the synthesis, still evaluating tradeoffs, still making the call. The AI made the grunt work faster, but your judgement is no better than it was before you had the tool.

The Agent removes the human from the loop for routine decisions. This is efficient until it isn’t. When an agent executes from a recorded decision trace, the human stops learning why each decision was made. The reasoning muscle atrophies. Two years in, when the agent encounters a situation outside its training distribution, the human who’s supposed to intervene has lost the context to intervene well. This is the knowledge atrophy problem, accelerated by design.

The Orchestra, Not the Soloist

There is a third mode. It doesn’t have a catchy name yet because the industry hasn’t converged on it. I call it the Ensemble Collaborator.

The distinction is architectural, not just philosophical.

A Copilot is typically one model doing one thing: an LLM generating text, summarizing documents, or answering questions. An Agent is typically one model with tools: an LLM that can call APIs, execute code, and chain actions together. Both center on a single intelligence, usually a large language model, doing the work.

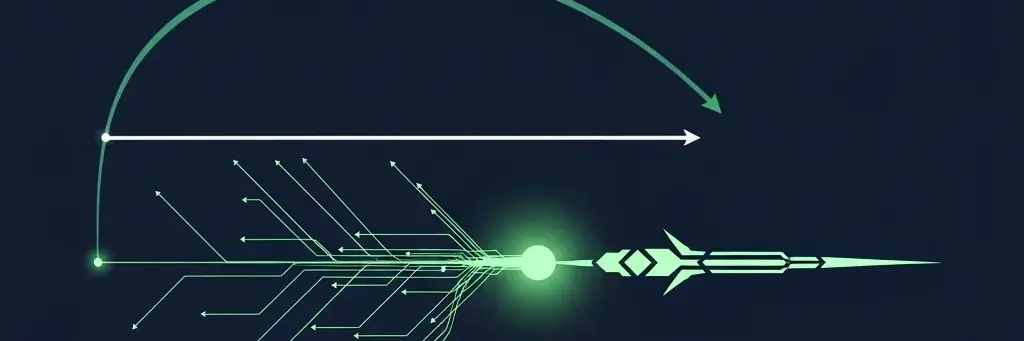

An Ensemble Collaborator is an orchestra. Different instruments for different parts of the problem, conducted together.

Monte Carlo simulation for scenario modeling. When you need to understand how a supply chain responds to a demand shock across 10,000 possible futures, you don’t ask an LLM to guess. You run the math.

Domain-specific ML models for forecasting. Demand prediction, lead time estimation, capacity utilization: these are problems where methods refined over decades of statistical modeling outperform language models. The right tool is the proven tool.

Constraint engines for feasibility. When a decision has hard boundaries (warehouse capacity, working capital limits, regulatory requirements), you need deterministic logic, not probabilistic generation.

LLMs for synthesis and inquiry. Where language models excel is at the interface between the human and the system. Translating business questions into analytical framings. Synthesizing findings across multiple models into a coherent narrative. And most importantly: asking the right questions before providing answers.

The result is a system where no single model is the decision-maker. Each model contributes what it’s best at. The human remains the integrator, but an integrator whose judgement is informed by simulation, calibrated by historical accuracy, and challenged by structured inquiry.
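To make the division of labor concrete, here is a minimal sketch of two of the instruments feeding a brief to the human. It is an illustration under stated assumptions, not a reference design: the function names, the normal-distribution shock model, and every number in it are hypothetical.

```python
import random
import statistics


def simulate_shortfalls(base_demand, capacity, shock_mean=1.0, shock_sd=0.25,
                        n_runs=10_000, seed=42):
    """Monte Carlo instrument: unmet demand across many sampled futures."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    shortfalls = []
    for _ in range(n_runs):
        # Assumed shock model: demand multiplier drawn from a normal
        # distribution and floored at zero. A real model would be fitted.
        multiplier = max(0.0, rng.gauss(shock_mean, shock_sd))
        shortfalls.append(max(0.0, base_demand * multiplier - capacity))
    return shortfalls


def violated_limits(plan, limits):
    """Constraint instrument: deterministic check against hard limits."""
    return sorted(name for name, cap in limits.items()
                  if plan.get(name, 0) > cap)


def brief_for_human(shortfalls, violations):
    """Evidence handed to the human integrator; no decision is made here."""
    ordered = sorted(shortfalls)
    return {
        "runs": len(shortfalls),
        "share_with_shortfall": sum(s > 0 for s in shortfalls) / len(shortfalls),
        "mean_shortfall": statistics.fmean(shortfalls),
        "p95_shortfall": ordered[int(0.95 * (len(ordered) - 1))],
        "violated_limits": violations,
    }


# Hypothetical plan and limits, for illustration only.
plan = {"warehouse_units": 1_200, "working_capital": 900_000}
limits = {"warehouse_units": 1_000, "working_capital": 1_000_000}

brief = brief_for_human(
    simulate_shortfalls(base_demand=1_000, capacity=1_100),
    violated_limits(plan, limits),
)
print(brief)
```

Notice what the sketch leaves out: nothing in it picks an option. The simulation reports a distribution, the constraint check reports violations, and the call stays with the person reading the brief.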

Socratic Inquiry vs. Prompting

The most counterintuitive design choice in this third mode is that the system asks questions before it provides answers.

In the Copilot model, the human prompts and the AI responds. The quality of the output depends on the quality of the prompt. This is why “prompt engineering” became a discipline. The burden of framing the problem well falls entirely on the human.

In the Agent model, the human states a goal and the AI decomposes it into tasks. The framing happens inside the model. The human trusts the decomposition or doesn’t, but rarely sees it.

The Ensemble Collaborator inverts both patterns. Before the system runs scenarios or generates recommendations, it asks framing questions designed to surface assumptions the decision-maker hasn’t examined.

“You’re evaluating a capacity expansion. What demand growth rate are you assuming, and what would change your mind about that assumption?”

“Three of your last four product launches in this therapeutic area missed Year 1 targets. What’s different about this one?”

“Your scenario assumes current supplier lead times. Two of your top three suppliers have had disruptions in the past 18 months. Should we model that risk?”

These aren’t prompts from the human to the machine. They’re prompts from the machine to the human. The system is doing something an LLM alone cannot: it’s drawing on simulation results, historical patterns, and calibration data to identify the specific blind spots in this specific decision.

This is Socratic inquiry, not prompt engineering. The human’s thinking gets sharper before any recommendation appears. The system isn’t just doing work (Agent) or helping with a task (Copilot). It’s improving the quality of the question before attempting the answer.
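A small sketch shows how a question like the launch example could be grounded in data rather than generated from thin air. Everything here is assumed for illustration: the `Launch` record, the four-launch window, and the "more than half missed" trigger are hypothetical choices, and in a fuller system a language model would likely handle the phrasing.

```python
from dataclasses import dataclass


@dataclass
class Launch:
    name: str
    year1_target: float
    year1_actual: float


def framing_questions(history, assumed_growth, window=4):
    """Return questions for the decision-maker, grounded in recent history."""
    recent = history[-window:]
    misses = [launch for launch in recent
              if launch.year1_actual < launch.year1_target]

    # Always surface the headline assumption before anything is modeled.
    questions = [
        f"You are assuming {assumed_growth:.0%} demand growth. "
        "What evidence would change your mind about that assumption?"
    ]
    # Raise the track-record question only when most recent launches missed.
    if recent and len(misses) > len(recent) / 2:
        questions.append(
            f"{len(misses)} of your last {len(recent)} launches missed "
            "Year 1 targets. What is different about this one?"
        )
    return questions


# Hypothetical track record, for illustration only.
history = [
    Launch("A", year1_target=100, year1_actual=80),
    Launch("B", year1_target=120, year1_actual=125),
    Launch("C", year1_target=90, year1_actual=70),
    Launch("D", year1_target=110, year1_actual=95),
]

questions = framing_questions(history, assumed_growth=0.12)
for question in questions:
    print(question)
```

The trigger is deliberately crude. The point is the direction of flow: the record of past outcomes decides which question gets asked, and the human answers it before any recommendation exists.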

Sharpening the Vector

Context is a scalar; judgement is a vector. A scalar has only magnitude; a vector has magnitude and direction.

The Copilot helps you gather scalars faster. You get your data points, your summaries, your drafts. But the direction of your decision, the vector, is unchanged. You’re still pushing in whatever direction your existing thinking was already pointed.

The Agent applies its own vector. It decides the direction and magnitude based on its training and the context it can access. This is powerful for routine decisions with clear precedent. It’s dangerous for strategic decisions where the direction itself is the hard part.

The Ensemble Collaborator sharpens the human’s vector. Through Socratic inquiry, it challenges the direction before the decision is made. Through simulation, it stress-tests the magnitude against thousands of possible futures. Through calibration, it adjusts for the known biases of the people in the room. The human still chooses the direction. But the choice is informed by simulation, tested against futures, and calibrated against track records in ways neither a Copilot nor an Agent can offer.

The result is a system that doesn’t replace human judgement and doesn’t merely assist with tasks. It compounds human judgement over time. Every decision captured, every prediction scored, every assumption tested feeds back into the system’s ability to ask better questions and surface better challenges next time.
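The feedback loop can be sketched in a few lines. This is one simple way to do it, assuming calibration means scaling a new forecast by the average ratio of past actuals to past forecasts; the `CalibrationLog` class is hypothetical, and a production system would likely use something richer, such as per-forecaster or per-category adjustments.

```python
class CalibrationLog:
    """Stores scored predictions and adjusts new forecasts for past bias."""

    def __init__(self):
        self.records = []  # (forecast, actual) pairs

    def score(self, forecast, actual):
        """Record the outcome of a prediction once the actual is known."""
        self.records.append((forecast, actual))

    def bias_ratio(self):
        """Mean of actual / forecast; below 1.0 means habitual over-forecasting."""
        ratios = [actual / forecast
                  for forecast, actual in self.records if forecast > 0]
        return sum(ratios) / len(ratios) if ratios else 1.0

    def adjust(self, forecast):
        """Scale a new forecast by the bias observed so far."""
        return forecast * self.bias_ratio()


# Hypothetical history: three forecasts that all ran high.
log = CalibrationLog()
log.score(forecast=100, actual=80)
log.score(forecast=200, actual=150)
log.score(forecast=50, actual=45)

adjusted = log.adjust(1_000)
print(round(adjusted, 2))
```

Each call to `score` changes what `adjust` returns next time, which is the compounding described above in miniature: the log gets more useful with every decision that passes through it.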

Why This Mode Is Harder to Build and Harder to Market

The industry focuses on Copilots and Agents because they’re easy to explain.

“AI that helps you write faster.” Everyone understands that. “AI that handles your workflow autonomously.” The demo writes itself.

“AI that asks you hard questions before you make a decision, using an ensemble of Monte Carlo simulation, domain-specific ML, constraint engines, and language models, calibrated against your organization’s historical accuracy patterns.” That’s a harder pitch.

The difficulty of explaining it is precisely why the opportunity is large. Copilots are commodity infrastructure. Every major platform offers one. Agents are rapidly commoditizing too. The frameworks are open, the patterns are established, and the race is now about execution speed.

The Ensemble Collaborator isn’t a feature you bolt on. It’s an architectural commitment to building systems that make human judgement structurally better rather than structurally unnecessary. It requires domain expertise to know which models to orchestrate. It requires decision science to design the Socratic inquiry. It requires years of calibration data to tune the system’s ability to challenge assumptions productively.

This is the mode that builds the judgement graph. It actively engages humans in better reasoning and records the structure of that reasoning for future use.

The next decade of enterprise AI will be defined by which companies make the best decisions, and whether they built the infrastructure to keep getting better at it.